Install Apache Spark on Windows 8.1: Jupyter Notebook runtime

Optionally, if you want to use the Jupyter Notebook runtime of Spark, first install it in the environment with `conda install notebook`. The following environment variables then need to be set:
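Here is a minimal Python sketch. It assumes the variables meant are PySpark's standard driver settings, `PYSPARK_DRIVER_PYTHON=jupyter` and `PYSPARK_DRIVER_PYTHON_OPTS=notebook`. The snippet adds them to a copy of the current environment and prints the matching Windows `setx` commands. The helper names `jupyter_env` and `setx_commands` are hypothetical.

```python
import os

# Assumption: PySpark's launcher reads these two variables and, when they are
# set as below, starts a Jupyter Notebook instead of the plain PySpark shell.
JUPYTER_DRIVER_VARS = {
    "PYSPARK_DRIVER_PYTHON": "jupyter",
    "PYSPARK_DRIVER_PYTHON_OPTS": "notebook",
}


def jupyter_env(base=None):
    """Return a copy of `base` (default: os.environ) with the Jupyter driver variables added."""
    env = dict(os.environ if base is None else base)
    env.update(JUPYTER_DRIVER_VARS)
    return env


def setx_commands():
    """Build Windows `setx` commands that persist the variables for the current user."""
    return [f'setx {name} "{value}"' for name, value in JUPYTER_DRIVER_VARS.items()]


if __name__ == "__main__":
    for command in setx_commands():
        print(command)
```

`setx` only affects consoles opened afterwards. For a one-off launch, the dictionary returned by `jupyter_env()` can be passed as the `env` argument of `subprocess.run`, for example `subprocess.run("pyspark", shell=True, env=jupyter_env())`.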

How to install Apache Spark on Windows 8.1 for Spark NLP

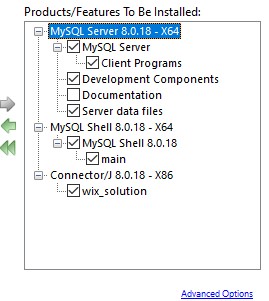

```dockerfile
RUN adduser --disabled-password \
    --gecos "Default user" \
    --uid $
```

Windows Support

How to correctly install Spark NLP on Windows

To take full advantage of Spark NLP on Windows (8 or 10), you need to set up Apache Spark, Apache Hadoop, Java and a Python environment correctly. Follow the steps below to set up Spark NLP with Spark 3.1.2:

- Install Java, making sure you install it in the root of your main drive (`C:\java`). During installation, after changing the path, select the setting Path.
- Download the pre-compiled Hadoop binaries `winutils.exe` and `hadoop.dll` from the download link and put them in a folder called `C:\hadoop\bin`. Note: the version above is for Spark 3.1.2, which was built for Hadoop 3.2.0. You might have to change the Hadoop version in the link, depending on which Spark version you are using.
- Download Apache Spark 3.1.2 and extract it to `C:\spark`.
- Set (or add) the environment variables `HADOOP_HOME` to `C:\hadoop` and `SPARK_HOME` to `C:\spark`.
- Add `%HADOOP_HOME%\bin` and `%SPARK_HOME%\bin` to the `PATH` environment variable.
- Install the Microsoft Visual C++ 2010 Redistributable Package (x64).
- If you encounter issues with permissions to the `/tmp` and `/tmp/hive` folders, you might need to change the permissions by running the following commands:

```
%HADOOP_HOME%\bin\winutils.exe chmod 777 /tmp/
%HADOOP_HOME%\bin\winutils.exe chmod 777 /tmp/hive
```

We recommend using conda to manage your Python environment on Windows. See Quick Install on how to set up a conda environment with Spark NLP.
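You can verify the Windows checklist with a small Python script. This is a sketch under stated assumptions. It only inspects environment variables and file paths, using the `C:\hadoop` and `C:\spark` layout from the steps. It does not install or change anything. The function name `check_windows_setup` is hypothetical.

```python
import ntpath
import os


def check_windows_setup(env=None, exists=os.path.exists):
    """Return a list of problems found in a Spark-on-Windows setup (empty list means OK).

    `env` defaults to os.environ; `exists` is injectable so the check can be
    run against a simulated file system.
    """
    env = dict(os.environ if env is None else env)
    problems = []

    hadoop_home = env.get("HADOOP_HOME")
    spark_home = env.get("SPARK_HOME")
    if not hadoop_home:
        problems.append("HADOOP_HOME is not set (expected C:\\hadoop)")
    if not spark_home:
        problems.append("SPARK_HOME is not set (expected C:\\spark)")

    # Windows PATH entries are ';'-separated and compared case-insensitively.
    path_entries = {p.rstrip("\\").lower() for p in env.get("PATH", "").split(";") if p}

    # Both %HADOOP_HOME%\bin and %SPARK_HOME%\bin must be on PATH.
    for home in (hadoop_home, spark_home):
        if home:
            bin_dir = ntpath.join(home, "bin")
            if bin_dir.rstrip("\\").lower() not in path_entries:
                problems.append(f"{bin_dir} is not on PATH")

    # The pre-compiled Hadoop binaries must sit in %HADOOP_HOME%\bin.
    if hadoop_home:
        for binary in ("winutils.exe", "hadoop.dll"):
            full_path = ntpath.join(hadoop_home, "bin", binary)
            if not exists(full_path):
                problems.append(f"missing {full_path}")

    return problems


if __name__ == "__main__":
    for problem in check_windows_setup():
        print("problem:", problem)
```

The script uses `ntpath` instead of `os.path`, so the Windows path rules apply even if the check is exercised on another operating system.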

Spark NLP on Docker, EMR, Databricks and Kaggle

```dockerfile
RUN apt-get update && apt-get install -y \
    tar \
```

Spark NLP 3.4.3 has been tested and is compatible with the following EMR releases:

NOTE: EMR 6.0.0 is not supported by Spark NLP 3.4.3.

How to create an EMR cluster via CLI

To launch an EMR cluster with Apache Spark/PySpark and Spark NLP correctly, you need to have a bootstrap action and a software configuration.
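For the CLI route, the sketch below builds an `aws emr create-cluster` argument list in Python. It includes a bootstrap action and a `--configurations` JSON document. Most of the specifics are hypothetical placeholders rather than values from the Spark NLP documentation: the cluster name, the release label, the instance settings, the S3 bootstrap script path and the Spark properties. Pick the release label from the tested list. The one rule taken from the text is that `emr-6.0.0` is rejected.

```python
import json
import shlex

# Release explicitly called out above as unsupported by Spark NLP 3.4.3.
UNSUPPORTED_RELEASES = {"emr-6.0.0"}


def emr_create_cluster_args(
    release_label,
    bootstrap_script,            # hypothetical, e.g. "s3://my-bucket/spark-nlp-bootstrap.sh"
    name="spark-nlp-cluster",    # hypothetical cluster name
    instance_type="m5.xlarge",   # hypothetical instance settings
    instance_count=3,
):
    """Build the argv list for `aws emr create-cluster` with a bootstrap action and Spark configuration."""
    if release_label in UNSUPPORTED_RELEASES:
        raise ValueError(f"{release_label} is not supported by Spark NLP 3.4.3")

    # Software configuration: example spark-defaults properties (assumed values).
    configurations = [
        {
            "Classification": "spark-defaults",
            "Properties": {
                "spark.serializer": "org.apache.spark.serializer.KryoSerializer",
                "spark.kryoserializer.buffer.max": "2000M",
            },
        }
    ]

    return [
        "aws", "emr", "create-cluster",
        "--name", name,
        "--release-label", release_label,
        "--applications", "Name=Hadoop", "Name=Spark",
        "--instance-type", instance_type,
        "--instance-count", str(instance_count),
        "--use-default-roles",
        "--bootstrap-actions", f"Path={bootstrap_script},Name=spark-nlp-bootstrap",
        "--configurations", json.dumps(configurations),
    ]


if __name__ == "__main__":
    # Hypothetical release label and bucket; substitute a tested release and your own script.
    args = emr_create_cluster_args("emr-6.2.0", "s3://my-bucket/spark-nlp-bootstrap.sh")
    print(shlex.join(args))  # POSIX-shell quoting
```

You can also pass the list directly to `subprocess.run(args, check=True)`, which avoids shell quoting of the JSON. This assumes the AWS CLI is installed and configured.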

Spark NLP 3.4.3 has been tested and is compatible with the following runtimes:

NOTE: Spark NLP 3.4.3 is based on TensorFlow 2.4.x, which is compatible with CUDA 11 and cuDNN 8.0.2. The only Databricks runtimes supporting CUDA 11 are 8.x and above, as listed under GPU.

Install Spark NLP on Databricks

1. Create a cluster if you don't have one already.
2. On a new or existing cluster, add the following to the Advanced Options -> Spark tab:
3. In the Libraries tab inside your cluster, follow these steps:
   - 3.1. Install New -> PyPI -> spark-nlp -> Install
   - 3.2. Install New -> Maven -> Coordinates -> :spark-nlp_2.12:3.4.3 -> Install
4. Now you can attach your notebook to the cluster and use Spark NLP!

NOTE: Databricks runtimes support different Apache Spark major releases. Please make sure you choose the correct Spark NLP Maven package name for your runtime from our Packages Cheatsheet.

Databricks Notebooks

You can view all the Databricks notebooks from this address:

Note: You can import these notebooks by using their URLs.
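You can also script the two library installs: the PyPI `spark-nlp` package and the Maven package. The sketch below builds the JSON body for the Databricks Libraries API (`POST /api/2.0/libraries/install`), which accepts `pypi` and `maven` library specifications. The helper name, cluster ID and Maven group ID are hypothetical. The caller must supply full `groupId:artifactId:version` coordinates, and the helper rejects a coordinate with an empty group.

```python
import json


def libraries_install_payload(cluster_id, maven_coordinates, pypi_package="spark-nlp"):
    """Build the request body for the Databricks Libraries API `POST /api/2.0/libraries/install`.

    `maven_coordinates` must be a full `groupId:artifactId:version` string for your runtime.
    """
    parts = maven_coordinates.split(":")
    if len(parts) != 3 or not all(parts):
        raise ValueError(f"expected groupId:artifactId:version, got {maven_coordinates!r}")
    return {
        "cluster_id": cluster_id,
        "libraries": [
            {"pypi": {"package": pypi_package}},
            {"maven": {"coordinates": maven_coordinates}},
        ],
    }


if __name__ == "__main__":
    # Hypothetical cluster ID and Maven group ID; substitute your own values.
    body = libraries_install_payload("0123-456789-abcde123", "example.group:spark-nlp_2.12:3.4.3")
    print(json.dumps(body, indent=2))
```

Any HTTP client can send the body to the workspace, authenticated with a personal access token. The request itself is left out of this sketch.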

Spark NLP quick start on Kaggle Kernel is a live demo on Kaggle Kernel that performs named entity recognition using a Spark NLP pretrained pipeline.

```
# Let's set up Kaggle for Spark NLP and PySpark
!wget -O - | bash
```